1. Datasets

For this work, a large-scale synthetic dataset was generated from MIDI files. The dataset is available in three variants: (i.) the MIDI dataset synthesized as-is, and (ii.) the MIDI dataset with drum instrument onset counts balanced across the drum instrument classes under observation, for two class systems: (ii.a.) m: eight classes, and (ii.b.) l: 18 classes.

2. Models

Trained models are available as an ensemble of CRNN networks, each trained on one of the datasets used: MIDI, MIDI bal., ENST, MDB, and RBMA. Again, two versions are provided: one trained for eight and one trained for 18 instrument classes. The models are packaged within a custom-built madmom package. The executable can be found under madmom/bin/DrumTranscription. For execution details, see the built-in help and documentation.

3. Experiment Details

This section provides additional materials used for creating the dataset, as well as the F-measure values for all combinations of training dataset, model, and evaluation dataset, both as overall mean/sum values and on an instrument level. The dataset names are encoded as follows: the first dataset name always specifies the dataset on which the evaluation was run. A dataset name in parentheses specifies the dataset used for model training. For in-set cross-validation, no dataset name in parentheses is given.

4. Evaluation Plots

This section provides additional plots that did not fit into the paper. They show evaluation results for all datasets where these are missing from the paper.

4.1 Instrument Distribution

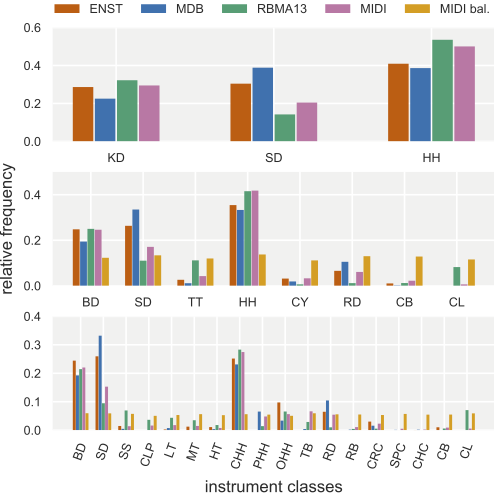

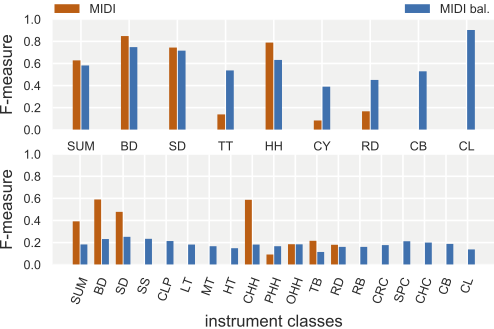

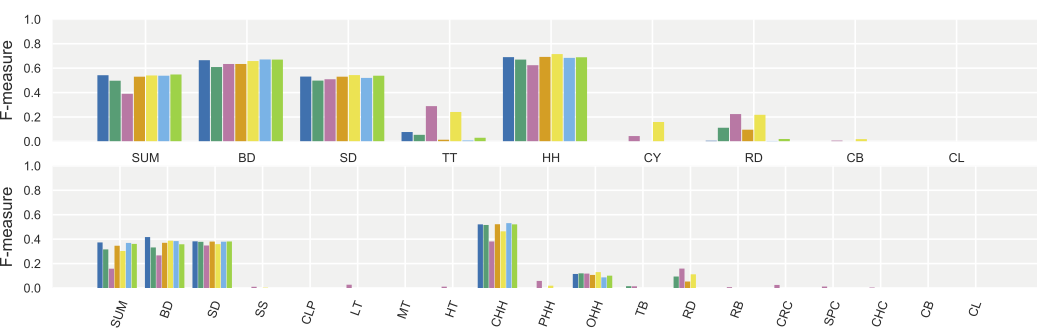

4.2 Performance on Balanced Sets for CNN

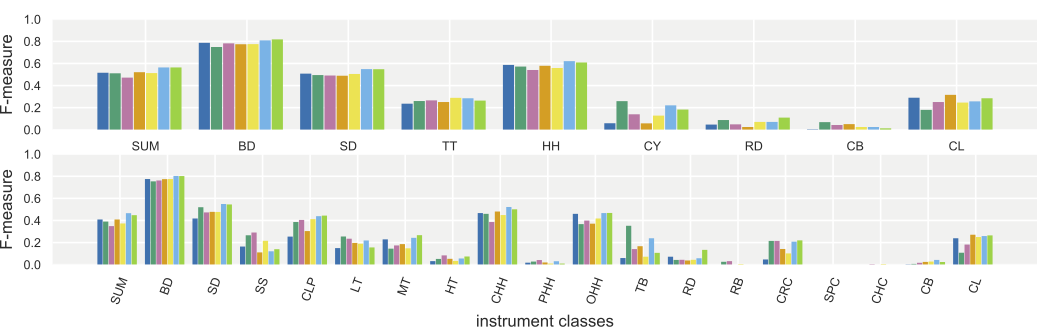

Instrument class details for evaluation results on MIDI and MIDI bal. for eight and 18 instrument classes using the CNN model. The first value (SUM) represents the overall sum F-measure result.
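To make the difference between the per-instrument values and the SUM value concrete, the following is a minimal sketch. It assumes that the sum F-measure is computed from true positive, false positive, and false negative counts pooled over all instruments, and it follows the convention stated for the real-world plots that a class without annotations scores 1 as long as no false positives occur. The function names and the example counts (including the BD and SD labels) are hypothetical and are not taken from the evaluation code or results.

```python
from typing import Dict, Tuple

# (true positives, false positives, false negatives) for one instrument class
Counts = Tuple[int, int, int]


def f_measure(tp: int, fp: int, fn: int) -> float:
    """F-measure from raw onset counts.

    Returns 1.0 when there is nothing to detect and nothing was detected,
    i.e. a class that is missing from a dataset and produced no false positives.
    """
    if tp + fp + fn == 0:
        return 1.0
    return 2 * tp / (2 * tp + fp + fn)


def sum_f_measure(counts: Dict[str, Counts]) -> float:
    """Pool the counts over all instruments, then compute one F-measure."""
    tp = sum(c[0] for c in counts.values())
    fp = sum(c[1] for c in counts.values())
    fn = sum(c[2] for c in counts.values())
    return f_measure(tp, fp, fn)


def mean_f_measure(counts: Dict[str, Counts]) -> float:
    """Compute one F-measure per instrument, then average them."""
    return sum(f_measure(*c) for c in counts.values()) / len(counts)


# Hypothetical counts for illustration only.
example_counts: Dict[str, Counts] = {
    "BD": (90, 10, 10),
    "SD": (40, 10, 30),
    "CLP": (0, 0, 0),  # class absent from the dataset, no false positives
}

per_instrument = {name: f_measure(*c) for name, c in example_counts.items()}
overall_sum = sum_f_measure(example_counts)
overall_mean = mean_f_measure(example_counts)
```

With pooled counts, frequent instruments dominate the SUM value, whereas the mean weights every class equally, so an absent class that scores 1 raises the mean but leaves the sum unchanged.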

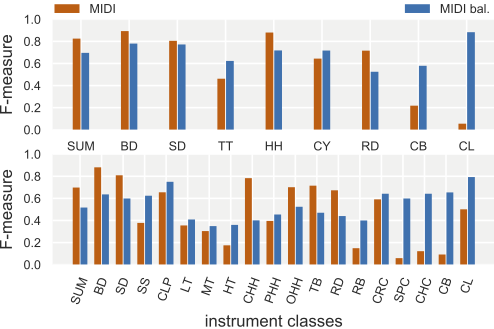

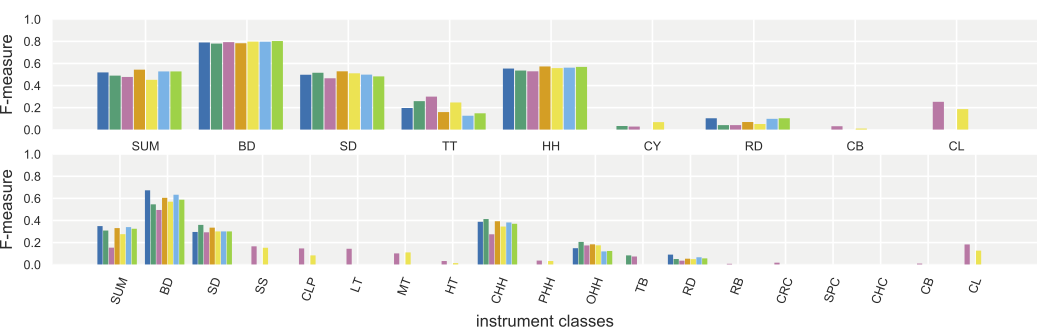

4.3 Performance on Balanced Sets for CRNN

Instrument class details for evaluation results on MIDI and MIDI bal. for eight and 18 instrument classes using the CRNN model. The first value (SUM) represents the overall sum F-measure result.

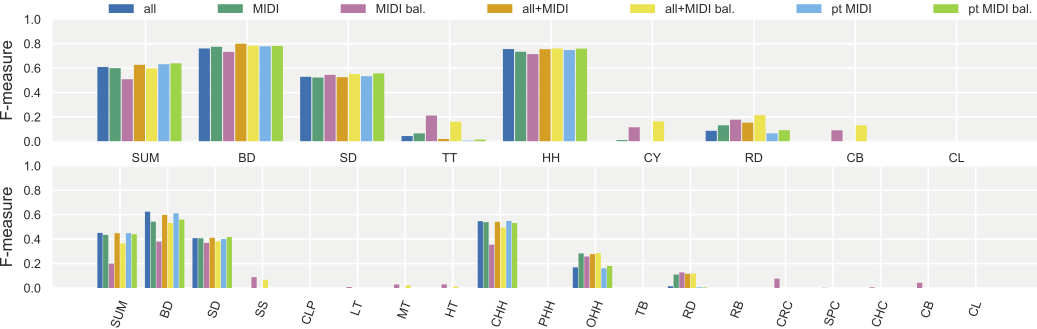

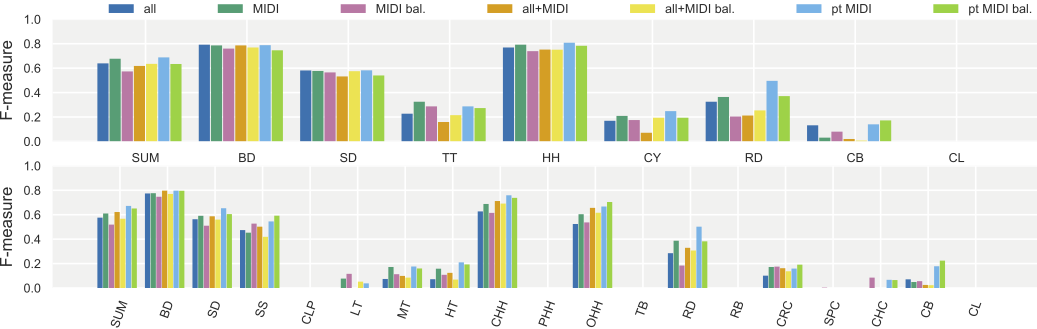

4.4 Performance on Real World Sets for CNN

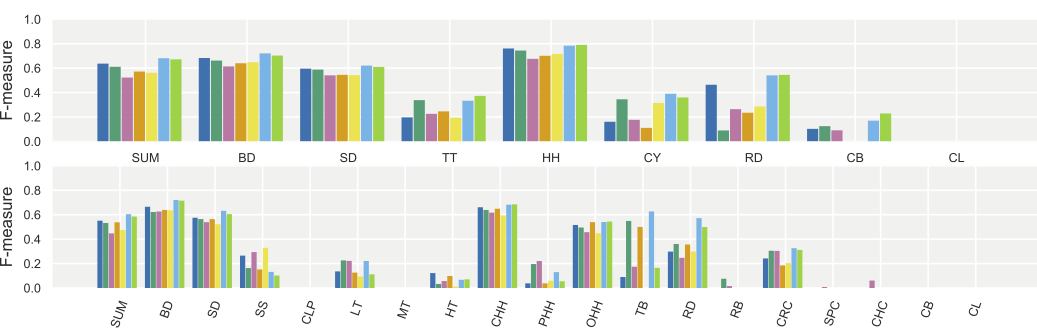

These plots show F-measure results for each instrument, for both the eight-class (top) and the 18-class (bottom) scenarios, on the different datasets. The color of the bars indicates the dataset or combination of datasets used for training: all: the three public datasets; MIDI: the synthetic dataset; MIDI bal.: the synthetic dataset with balanced classes; all+MIDI: the three public datasets plus a 1% split of the synthetic dataset; all+MIDI bal.: the three public datasets plus the 1% split of the balanced synthetic dataset; pt MIDI and pt MIDI bal.: pre-trained on the MIDI and MIDI bal. datasets, respectively, with refinement on all. The first set of bars on the left (SUM) shows the overall sum F-measure value. Some datasets are missing drum instruments from the class system; in these cases the corresponding F-measure value is by definition 1 (if the model does not generate false positives). To keep the charts readable, these bars have been removed; for missing classes (such as CLP for ENST), F-measure values therefore appear to be 0. Especially in the 18-class scenario using the CRNN, pre-training seems to produce slightly better models, which are capable of detecting underrepresented classes while still performing well on the other classes. In general, the balanced sets seem to be more problematic for the CRNN, which might indicate that the CRNNs do start to learn patterns that are not representative in the balanced sets.

ENST

MDB

RBMA

4.5 Performance on Real World Sets for CRNN

Same as above, but for CRNN models.

ENST

MDB

RBMA

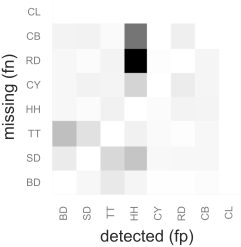

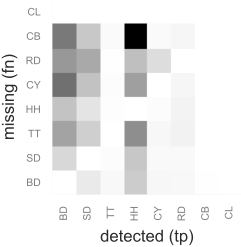

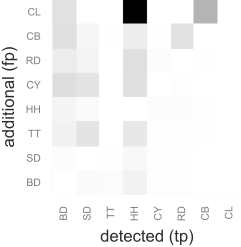

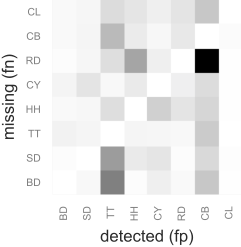

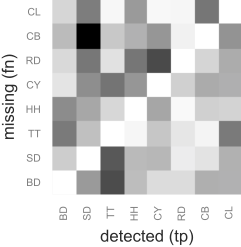

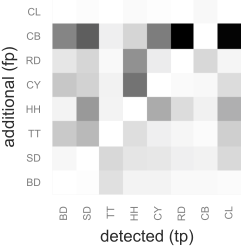

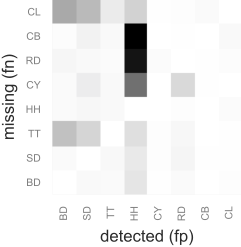

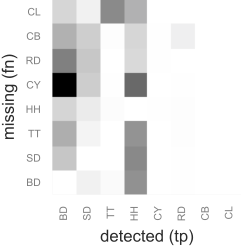

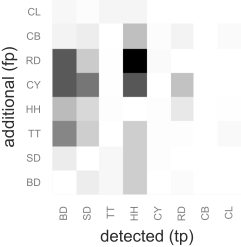

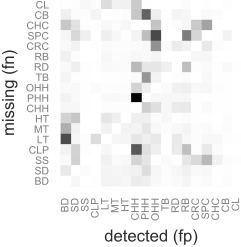

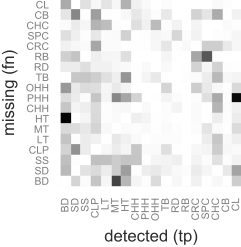

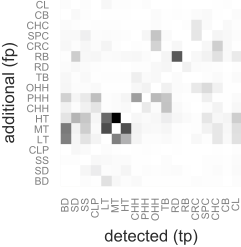

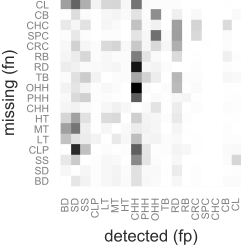

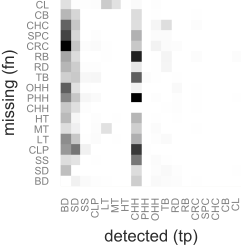

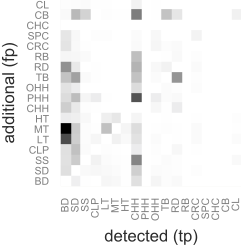

5. Confusion Matrices

To gain more insight into which errors the systems make when classifying within the eight- and 18-class systems, three sets of pseudo confusion matrices were created. These indicate how often (i.) a false positive for another instrument was found for a false negative (classic confusion); (ii.) a true positive for another instrument was found for a false negative (onset masked or hidden); and (iii.) a true positive for another instrument was found for a false positive (positive masking or excitement).

5.1 CRNN on MIDI (8 classes)

5.2 CRNN on MIDI bal. (8 classes)

5.3 CRNN on all+MIDI (8 classes)

5.4 CRNN on MIDI (18 classes)

5.5 CRNN on MIDI bal. (18 classes)

5.6 CRNN on all+MIDI (18 classes)

6. Splits Definitions

This section provides the split definitions for three-fold cross-validation for each of the datasets used.

Splits for ENST

Splits for MDB-Drums

Splits for RBMA 13

Splits for synthetic MIDI dataset (full and 1%)

dataset

spreadsheet with statistics for switched instruments for class balancing

raw results output of experiments