Vienna University of Technology

Institute of Software Technology and Interactive Systems

Documenting Virtual Worlds

Introduction

This webpage presents approaches to the challenges of archiving virtual worlds. They focus on a natural documentation technique that produces material similar to modern TV and photo documentaries. Two solutions are proposed, both of which gather information the same way regular users perceive the world around them. The first is specific to Second Life: we create and script an object within the Second Life engine so that it moves through the virtual world, detects points of interest based on user activity, and makes a visual recording of those points. As this method is intrusive and relies on features specific to Second Life, we also present an approach that is independent of the observed virtual world. Here the character is moved by an external helper program, which also grabs the screen contents and compares histograms to detect whether the avatar is stuck.

Preserving Second Life

General Information

The original goal of the experiment was to build a camera drone inside the Second Life world, using the tools for content creation provided by the regular SL Viewer, and to automate the drone to traverse the world and record still images or video footage while it does so.

Camera control is only possible for objects that are attached to an avatar or that an avatar sits on. We therefore turn our camera into a so-called "HUD attachment", a special mechanic which allows the SL Viewer to integrate scripted objects into the visual display of the avatar. Originally intended for gameplay elements like radars or life gauges, it merges the camera object with the viewport of the current user, so the script can take control of the viewport and manoeuvre it around like a floating camera. While the camera moves, the avatar it is attached to remains stationary, and the camera object is invisible to other participants in Second Life. Using the script, we can remote-control the camera and move it automatically, recording scenes of interest (e.g. hotspots with many avatars) without manual supervision.

The solution allows us to move a camera automatically in Second Life. The resulting images can be recorded for preservation purposes. However, the solution is limited to areas in Second Life where scripting is enabled.

The screenshot below illustrates how our system captures SL visuals. The only difference from a regular SL viewport is the red circle in the upper right corner, which is the placeholder object that we scripted and anchored to the HUD. This way, the recorded information is as close as possible to what an SL user would perceive from a first-person perspective.


Camera Script

camera_control.txt [txt, 7kb]

Example Videos

binding script to Avatar's HUD [avi, 6.5mb]

Activating the camera and moving it independently of the avatar. [avi, 12.9mb]

Recording other users using the flying camera. [avi, 62.1mb]

Future Work

For future work, we propose to use an array of fixed sensors positioned in a grid across the SL area to be archived. The sensors will be placed inside phantom objects that the SL viewer does not render, so they are invisible to users, and will continuously monitor user activity around them. The sensors will then communicate their findings to the HUD-attached camera, which will evaluate the results and navigate towards the hotspot it computes to be the most interesting, based on metrics such as the number of avatars in the proximity. Of course, using such a phantom sensor array requires set-up in advance, as well as visible information for visitors to the area about the archival project. Assuming we want to cover a regular SL 'sim', which is a land area of 65,536 m² contained within a square with a 256 m edge, an exemplary archiving setup could look like the one depicted in the figure below.


While the method shown here is viable as a prototype, it is tied to the fundamental restriction of requiring the "run scripts" permission within the game world. During initial studies we found a large number of areas where scripting was prohibited. Taking into account these restrictions and the implications for the privacy and intimacy of the users involved, we propose our method as the method of choice for obtaining automated coverage of events of interest, in the same way a camera crew would record such events in the real world. The main benefit of our method lies in its automated operation once set-up is complete. The methodology would further support monitoring heuristics with differing archival priority settings if a proper sensor network were set up.
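The proposed sensor grid and hotspot selection can be illustrated with a short Java sketch. It is not part of the project: the 64 m spacing used in the usage note, the `Reading` class, and the scoring rule (avatar count multiplied by a priority weight) are assumptions made only for illustration.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Optional;

/** Illustrative only: sensor placement and hotspot choice for one 256 m x 256 m sim. */
final class SensorGridSketch {
    static final double SIM_EDGE_METRES = 256.0;

    /** One sensor report; the priority weight is a hypothetical archival setting. */
    static final class Reading {
        final double x;
        final double y;
        final int avatarCount;
        final double priority;

        Reading(double x, double y, int avatarCount, double priority) {
            this.x = x;
            this.y = y;
            this.avatarCount = avatarCount;
            this.priority = priority;
        }

        /** Assumed metric: avatars in range, scaled by the priority weight. */
        double score() {
            return avatarCount * priority;
        }
    }

    /** Centres of the square grid cells; the spacing is assumed to divide 256 evenly. */
    static List<double[]> sensorPositions(double spacingMetres) {
        List<double[]> positions = new ArrayList<>();
        int perEdge = (int) Math.round(SIM_EDGE_METRES / spacingMetres);
        for (int i = 0; i < perEdge; i++) {
            for (int j = 0; j < perEdge; j++) {
                positions.add(new double[] {(i + 0.5) * spacingMetres, (j + 0.5) * spacingMetres});
            }
        }
        return positions;
    }

    /** The reading with the highest score, or empty if no sensor saw any avatar. */
    static Optional<Reading> mostInteresting(List<Reading> readings) {
        return readings.stream()
                .filter(r -> r.avatarCount > 0)
                .max(Comparator.comparingDouble(Reading::score));
    }
}
```

With a spacing of 64 m, `sensorPositions(64.0)` yields a 4 x 4 grid of 16 sensors. Raising the `priority` of a sensor makes the camera prefer its surroundings even when fewer avatars are present.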

Both video and image capture can be enhanced by automatically combining them with a form of geotagging, i.e. by adding information about the location where the material was acquired to the image or movie data. This can be achieved either via metatags or via naming conventions. Since filming and screenshots have to be done with third-party utilities when using the official SL viewer, tagging has to be performed by the capturing application, at least in a basic form that allows for post-processing. A possible approach is to devise a controller application which feeds data to the SL viewer in the form of typed commands, the same way a human player would add comments.
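As an illustration of tagging by naming convention, the following Java sketch builds a file name from a region name, in-world coordinates, and a capture time. The naming scheme, the class, and the region name in the usage note are hypothetical. They are not taken from the project.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;
import java.util.Locale;

/** Illustrative only: encodes capture location and time in a file name. */
final class CaptureNameSketch {
    // Compact UTC timestamp, e.g. 20090601T123000Z (assumed convention).
    private static final DateTimeFormatter STAMP =
            DateTimeFormatter.ofPattern("yyyyMMdd'T'HHmmss'Z'").withZone(ZoneOffset.UTC);

    /** Builds "<region>_<x>-<y>-<z>_<timestamp>.<extension>". */
    static String fileName(String region, int x, int y, int z, Instant takenAt, String extension) {
        // Replace anything that is not a letter or digit so the name is safe on any file system.
        String safeRegion = region.trim().replaceAll("[^A-Za-z0-9]+", "-");
        return String.format(Locale.ROOT, "%s_%03d-%03d-%03d_%s.%s",
                safeRegion, x, y, z, STAMP.format(takenAt), extension);
    }
}
```

For example, `fileName("Example Region", 128, 64, 25, Instant.parse("2009-06-01T12:30:00Z"), "png")` returns `Example-Region_128-064-025_20090601T123000Z.png`. Fixed-width fields keep the names sortable and easy to parse during post-processing.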

There is also the prospect of using a specially compiled version of the Second Life client, adjusted to archival needs, given that the source code is available under the GNU GPL. Such an approach would solve many of the inconveniences we found while trying to work within the current official version, which has obviously not been designed with archival needs in mind. Most notably, this would allow direct processing of SL environment data and enhancements to avatar or vehicle control.

PlanesWalker

General Information

As the presented solution for Second Life depends on features offered by the virtual world, an approach for moving the avatar that is independent of the virtual world was suggested. This approach should meet the following requirements:

- a platform-independent solution should be developed

- it must be possible to interact with different virtual worlds without prior knowledge of them, by simulating user actions from outside the actual virtual world client

- orientation in the world via collision detection should be done by comparing histograms of the screenshots taken (based on the assumption that the screen changes less when the avatar is no longer able to move forward). In case of a collision, the avatar should turn in a different direction.
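The histogram comparison named in the last requirement can be sketched as follows. This is a minimal illustration and not the PlanesWalker code: the choice of a 64-bin grey-level histogram, the sum of absolute bin differences as the distance, and all names are assumptions.

```java
import java.awt.image.BufferedImage;

/** Illustrative only: detects a possible collision from two successive screenshots. */
final class HistogramSketch {
    static final int BINS = 64; // assumed resolution of the histogram

    /** Normalised grey-level histogram; each entry is the fraction of pixels in that bin. */
    static double[] histogram(BufferedImage image) {
        double[] bins = new double[BINS];
        int width = image.getWidth();
        int height = image.getHeight();
        for (int y = 0; y < height; y++) {
            for (int x = 0; x < width; x++) {
                int rgb = image.getRGB(x, y);
                int r = (rgb >> 16) & 0xFF;
                int g = (rgb >> 8) & 0xFF;
                int b = rgb & 0xFF;
                int grey = (r + g + b) / 3;
                bins[grey * BINS / 256]++;
            }
        }
        double total = (double) width * height;
        for (int i = 0; i < BINS; i++) {
            bins[i] /= total;
        }
        return bins;
    }

    /** Sum of absolute bin differences: 0.0 for identical histograms, 2.0 for disjoint ones. */
    static double difference(double[] a, double[] b) {
        double sum = 0.0;
        for (int i = 0; i < a.length; i++) {
            sum += Math.abs(a[i] - b[i]);
        }
        return sum;
    }

    /** True if the screen changed so little that the avatar is probably blocked. */
    static boolean looksStuck(BufferedImage previous, BufferedImage current, double threshold) {
        return difference(histogram(previous), histogram(current)) < threshold;
    }
}
```

Because the histograms are normalised, the threshold does not depend on the size of the captured area. A histogram ignores where pixels are located, so a scene that merely shifts sideways may still look unchanged, which is one reason the threshold needs tuning per world.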

The result was the Java program "PlanesWalker", developed as a student project between October 2009 and January 2010 by Michael-Alexander Kascha. PlanesWalker controls the avatar in a virtual world by simulating button presses, and takes screenshots of the virtual world, using the java.awt.Robot class. External screen recording programs can be used to make videos of a virtual tour through the area. A limitation of the program is that the virtual world client has to receive its movement commands from the operating system event queue. If the client uses a more low-level method of input detection (e.g. Second Life, EverQuest), the PlanesWalker application is not able to move the avatar.
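The following sketch shows how `java.awt.Robot` can simulate a held key and grab part of the screen. It is not the PlanesWalker source. The key bindings (W, A, D) are an assumption based on common game defaults, and the class and method names are hypothetical.

```java
import java.awt.Rectangle;
import java.awt.Robot;
import java.awt.event.KeyEvent;
import java.awt.image.BufferedImage;

/** Illustrative only: simulated key presses and screen capture via java.awt.Robot. */
final class RobotWalkSketch {
    enum Move { FORWARD, TURN_LEFT, TURN_RIGHT }

    /** Assumed key bindings; the real keys depend on the virtual world client. */
    static int keyCodeFor(Move move) {
        switch (move) {
            case FORWARD:
                return KeyEvent.VK_W;
            case TURN_LEFT:
                return KeyEvent.VK_A;
            default:
                return KeyEvent.VK_D;
        }
    }

    /** Holds a key down for the given time (Robot.delay accepts 0 to 60000 ms), then releases it. */
    static void holdKey(Robot robot, int keyCode, int millis) {
        robot.keyPress(keyCode);
        try {
            robot.delay(millis);
        } finally {
            // Always release the key, otherwise the avatar keeps moving.
            robot.keyRelease(keyCode);
        }
    }

    /** Captures the given screen area, e.g. the client window. */
    static BufferedImage grab(Robot robot, Rectangle area) {
        return robot.createScreenCapture(area);
    }
}
```

A caller would create the robot with `new Robot()`, which throws an `AWTException` when the platform does not allow low-level input control, and then call for instance `holdKey(robot, keyCodeFor(Move.FORWARD), 2000)` while the client window has the input focus.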

Usage

Once started, PlanesWalker controls the avatar and records in the selected documentation mode until the walk duration is reached (a red countdown of the remaining time is shown on the Start button) or until the Stop button is pushed.

Program Settings

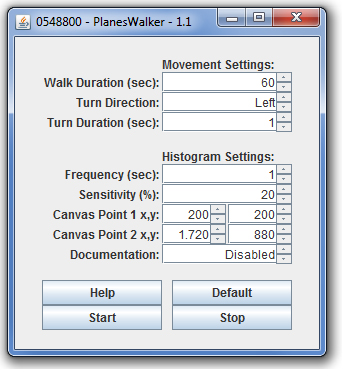

Movement Settings

Walk Duration determines how long the program should control the avatar.

Turn Direction changes the turn behavior once a possible obstacle has been detected. The available options are left, right, and random.

Turn Duration lets the user set how long the avatar should turn before walking straight again.

Histogram Settings

Frequency sets the interval at which histogram comparisons for obstacle detection are performed (while walking straight).

Sensitivity is a threshold value. If the difference between two successive histograms falls below this value, the turn behavior is triggered.

Canvas Points 1 & 2 adjust the screenshot area used for histogram creation by setting two opposite corners of a rectangle.
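How the movement and histogram settings interact can be sketched in a few lines of Java. The sketch is a simplified model and not the program's actual logic: it assumes that one histogram difference is available per check, and all names are hypothetical.

```java
import java.awt.Point;
import java.awt.Rectangle;
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Random;

/** Illustrative only: turns the settings into a sequence of walk and turn decisions. */
final class WalkLoopSketch {
    enum TurnDirection { LEFT, RIGHT, RANDOM }

    /** Screenshot area spanned by two opposite corners, given in any order. */
    static Rectangle canvas(Point p1, Point p2) {
        int x = Math.min(p1.x, p2.x);
        int y = Math.min(p1.y, p2.y);
        return new Rectangle(x, y, Math.abs(p1.x - p2.x), Math.abs(p1.y - p2.y));
    }

    /**
     * Decides one action per histogram check. Each entry of differences is the
     * histogram difference measured at that check; a value below the sensitivity
     * threshold is treated as a possible obstacle and triggers a turn.
     */
    static List<String> plan(double[] differences, double sensitivity,
                             TurnDirection direction, Random random) {
        List<String> actions = new ArrayList<>();
        for (double difference : differences) {
            if (difference < sensitivity) {
                TurnDirection turn = direction;
                if (direction == TurnDirection.RANDOM) {
                    turn = random.nextBoolean() ? TurnDirection.LEFT : TurnDirection.RIGHT;
                }
                actions.add("turn " + turn.name().toLowerCase(Locale.ROOT));
            } else {
                actions.add("walk");
            }
        }
        return actions;
    }
}
```

In this model, a higher Sensitivity value makes turns more likely, because more of the measured differences fall below the threshold. A real loop would additionally hold each turn for the Turn Duration and stop once the Walk Duration has elapsed.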

Documentation mode offers the option to enable text-based and/or screenshot documentation of the avatar's behavior. If this option is enabled, the program creates a subdirectory named after the current date and time in which to save the documentation files.
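Creating such a per-run subdirectory could look like the sketch below. The timestamp pattern and the names are assumptions. The format actually used by PlanesWalker is not stated here.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

/** Illustrative only: one documentation directory per walk, named after its start time. */
final class DocumentationDirSketch {
    // Assumed pattern; it avoids ':' so the name is valid on all common file systems.
    private static final DateTimeFormatter NAME =
            DateTimeFormatter.ofPattern("yyyy-MM-dd_HH-mm-ss");

    /** Creates (if necessary) and returns the directory for a walk started at the given time. */
    static Path createRunDirectory(Path parent, LocalDateTime startedAt) throws IOException {
        return Files.createDirectories(parent.resolve(NAME.format(startedAt)));
    }
}
```

A caller would pass `LocalDateTime.now()` when the walk starts and write the text log and screenshots into the returned directory.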

Example Videos

The following example videos were recorded with an external screen capture program while PlanesWalker controlled the avatar's movement in the virtual world.

Bree-Town in Lord of the Rings Online - 2010-01-20

Getting in and out of a dead-end corner with a chicken in it, walking past the Prancing Pony Inn, and showing at 1:39 how a Frequency value that is too low or a Sensitivity value that is too high can cause unexpected turning when the landscape doesn't really change.

PlanesWalker 1.1 in Lord of the Rings Online [avi, 8.8mb]

Northshire Valley in World of Warcraft - 2010-01-20

Getting away from a city wall and a bush blocking the path, walking over some small hills and back into civilization.

PlanesWalker 1.1 in World of Warcraft [avi, 8.2mb]

Downloads

PlanesWalker 1.1 (2010-01-20)

executable jar file [zip, 13kb]

java source code [zip, 10kb]

Further Information

A paper on the subject of Second Life preservation was presented at the 9th International Web Archiving Workshop (IWAW 2009):

Documenting a Virtual World - A Case Study in Preserving Scenes from Second Life (Mircea-Dan Antonescu, Mark Guttenbrunner, Andreas Rauber).